As part of the development and implementation of the learning analytics service, we are running workshops with invited colleges that have expressed an interest in the new service. The purpose is, first, to confirm that the planned service, which focuses on predictive models for learner retention and attainment, is relevant and, second, to explore the additional analytics priorities of colleges to inform further development of the service beyond 2016.

A workshop was held at Aston Conference Centre on 14 April and was attended by 15 people, including representatives from colleges and Jisc customer services. It was facilitated by Paul Bailey and Shri Footring from the Learning Analytics Project.

The purpose of the workshop was to:

- Prioritise the user stories for FE. We currently implement learning analytics models to predict which students are at risk of failing or not achieving their potential. We’d like to know what else you are interested in and agree a prioritised list we can start to explore.

- Identify and prioritise the data sources and systems we will need to integrate to deliver the analytics user stories. We are currently focusing on student information, VLE data, attendance data and library information. However, we’d like to know which other systems you use that need to be integrated, e.g. BKSB, and to prioritise the work required.

The workshop started with an overview of the priority areas around learning analytics and a brief description of the Jisc learning analytics service.

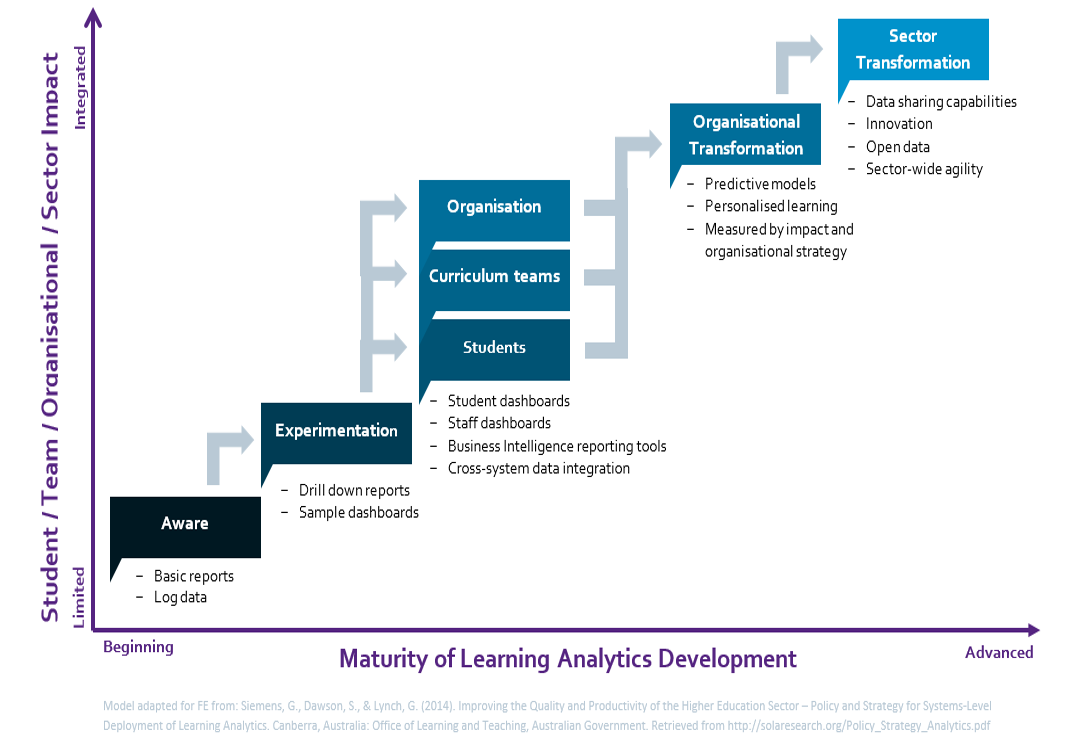

We then ran an ice-breaking activity in which participants placed their current learning analytics activity on the Learning Analytics Sophistication Model.

Colleges demonstrated a wide range of learning analytics activities, but there was a general direction of travel towards, and interest in, predictive analytics and organisational change, which is the area that the Jisc LA service is seeking to address.

User Stories for Analytics Requirements

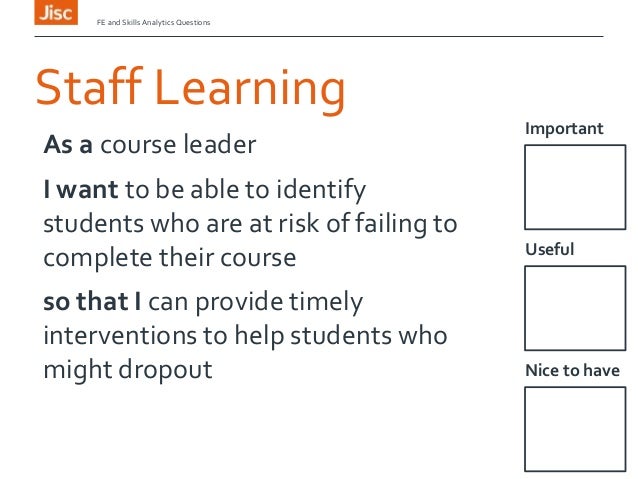

The next activity identified and prioritised a range of user stories around learning analytics. Participants created over 48 user stories describing how learning analytics could be used to bring improvements for learners, teachers or colleges.

The user stories were categorised as shown in the table below, and each participant voted for the stories they considered most important.

| Categories | Grouping of Stories | Number of User Stories | Votes |
| --- | --- | --- | --- |
| Improve learner performance | A. Learning staff focused | 12 | 28 |
| Improve learner performance | B. Intervention Management | 7 | 20 |
| Improve learner performance | C. Learner focused | 6 | 12 |
| Improve College Performance | D. Destinations / Learner Journey | 10 | 27 |
| Improve College Performance | E. Manager | 8 | 9 |
| Improve Learning Quality | F. Improving teaching | 6 | 12 |
| Define Criteria for College Strategy | G. National Benchmarking | 1 | 5 |

Conclusion from the prioritisation activity

The prioritisation confirmed an interest in the areas of learning analytics being developed by the Jisc service, specifically identifying learners at risk of failure or of not reaching their potential, managing effective interventions, and giving learners a view of their own engagement. It also identified a requirement for analytics tools that look at learner destinations and college performance. There is also a growing interest in using national data sets for benchmarking and informing college strategy.

Data sources

The next activity identified the data required and the sources of that data in colleges. A summary is given below.

| Internal System | Data required |
| --- | --- |
| MIS / Student Record System | Student demographics |
| Progression Tracking | Attendance, assessment results |
| VLE | Engagement with resources, activities and assessment |
| eILP / PLP | Personal targets, achievement |
| Library | Use of resources |
| CRM | Details of employers |

| External data sources | Data required |
| --- | --- |
| SFA | Qualification success rates |
| BIS | Outcomes based success measures |
| Ofsted | A variety of data and dashboards |
| UCAS | University admissions data |
| Alis | The advanced level information system |
| Data.gov.uk | Learning aims reference service |
| HEFCE | National student survey |
| UKCES | LMI, employer forecasts |

Grade Tracker

Roy Currie of Bedford College gave an overview of Grade Tracker and explained how he is working to integrate the tool with the Jisc learning analytics data.

Visualisations

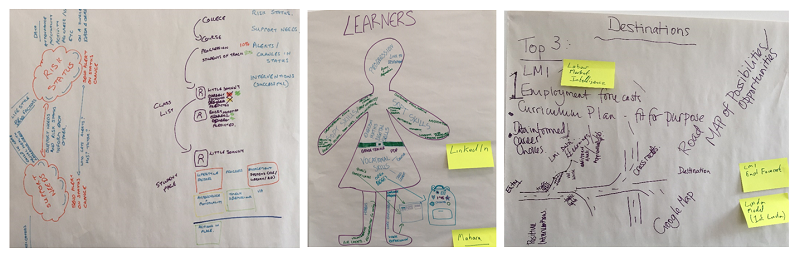

Finally, participants spent some time looking at three of the groups of user stories (learners, intervention management and destinations) to illustrate what visualisations for these could look like and to identify the data sources that would be required.

Next Steps

The workshop has further confirmed that the user stories around improving learner performance are aligned with the learning analytics service that Jisc is developing. We will therefore proceed with early implementations of the service with colleges that have already expressed an interest.

We will also continue to refine and prioritise the user stories in consultation with more colleges to identify future developments to enhance the analytics service.